Yep. You read the title right.

If you’re making a few (very common) A/B testing mistakes, you’re ruining your website.

Not inherently, of course. More like in an Eric the Red sort of way.

Quick history lesson: Eric the Red claimed and named Greenland. He was banished from Iceland and got stuck on a horrible, cold land mass (Greenland).

He hated it. So he went back to Iceland and pimped out this warm (lie) lush (lie) land called Greenland that everyone should go to. Consequently, those settlers believed him and spent the rest of their lives in misery eating ice (probably).

It was the misinformation that duped the settlers. And it’s the same misinformation from A/B tests that can eat up your precious time and money.

So why do A/B testing if there’s a chance for error? You do it because this can happen:

Our Welcome Mat for this guide converts at 8.86%. But we A/B tested with a video version of that mat, and we raised our conversion rate to 13.7%.

We’ll easily get 30,000 visitors to that guide. If we never A/B tested our Welcome Mat, we’d convert about 2,650 people.

However, because we ran a test, we found a version that will convert about 4,100 people.

That’s roughly 1,450 extra people on our list just because we ran an A/B test.

So it all comes down to the information you derive from the A/B tests. The bad news is there are 19 ways A/B testing can tank your conversions. If you’re running A/B tests, you may be committing one of these 19 errors, which lead to:

- Wasted time and money

- Fewer email addresses collected, leading to decreased sales and revenue

- A user experience nightmare for your visitors

So let me show you the 19 ways A/B testing is ruining your site. And don’t worry. I won’t leave you hanging – I’ll also show you how to fix these common issues.

- A/B TEST INFOGRAPHIC

- A/B Test Mistake #1: You Called It Too Early

- A/B Test Mistake #2: You Set The Test Up Wrong

- A/B Test Mistake #3: You Called It Too Late

- A/B Test Mistake #4: You Tested Too Much

- A/B Test Mistake #5: You Ran Too Many Tests On A Page

- A/B Test Mistake #6: You Tested The Variation Without The Control

- A/B Test Mistake #7: You Made A Generalization

- A/B Test Mistake #8: You Installed Your A/B Test Tool Wrong

- A/B Test Mistake #9: You Tested Something Insignificant

- A/B Test Mistake #10: You Didn’t Outline Your Goal

- A/B Test Mistake #11: You Didn’t Prioritize

- A/B Test Mistake #12: You Ignored The Data

- A/B Test Mistake #13: You Didn’t Settle For The Small Win

- A/B Test Mistake #14: You Tested Too Much In The Funnel

- A/B Test Mistake #15: You Didn’t Give The Variation A Chance

- A/B Test Mistake #16: You Mis-Read The Results

- A/B Test Mistake #17: Your Test Copy Wasn’t Good Enough

- A/B Test Mistake #18: You Chose A Bad Testing Period

- A/B Test Mistake #19: You Didn’t Enjoy The Victory

The 19 A/B Testing Mistakes That Ruin Your Website

A/B Test Mistake #1: You Called It Too Early

We’ve all been there. You run an A/B test, and after two days one of the variations is straight-up stomping the bejeezus out of your control (the thing you’re testing against).

Your pulse elevates, hands get a little sweaty and your mouse hovers over the “Stop Test” button because, praise be, you’ve got a winner!

Don’t stop the test.

Chances are you’re stopping the test too early. I can’t begin to tell you how many A/B tests are performed where the variant (the change you’re testing against the control) surges out to a lead…only to be caught and passed by the control later on.

If you switch to the variant too early, you could actually lose conversions in the long run.

The Solution:

Put thine faith into statistical significance.

- "Statistical significance is attained when a p-value is less than the significance level (denoted α, alpha). The p-value is the probability of obtaining at least as extreme results given that the null hypothesis is true whereas the significance level α is the probability of rejecting the null hypothesis given that it is true."

Yeah. That’s not hard to understand at all.

Basically, statistical significance is a measure of whether a result reflects a real difference or just chance. The higher the percentage, the more likely the result is the real deal.

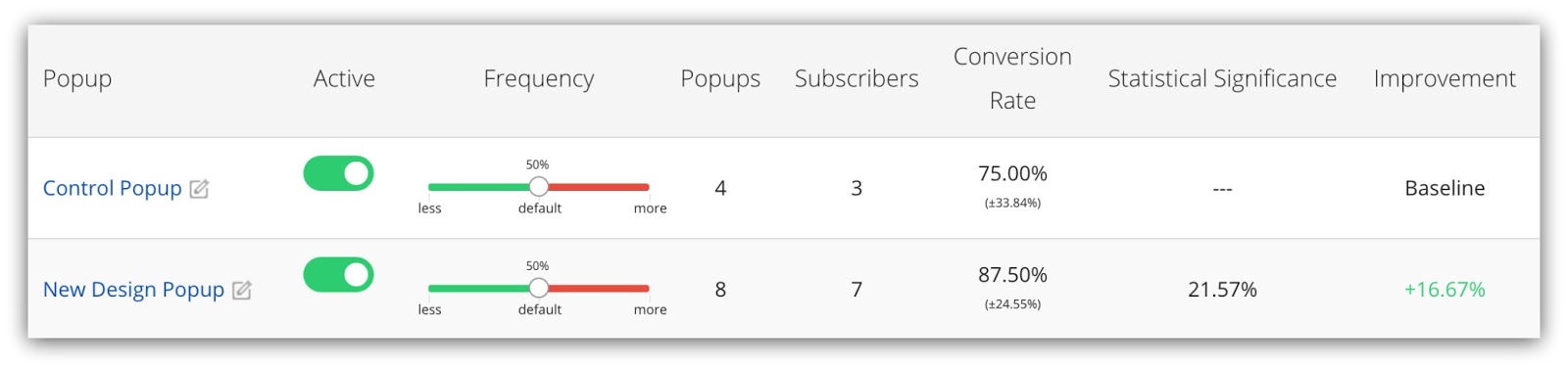

Here’s an example of statistical significance from Sumo:

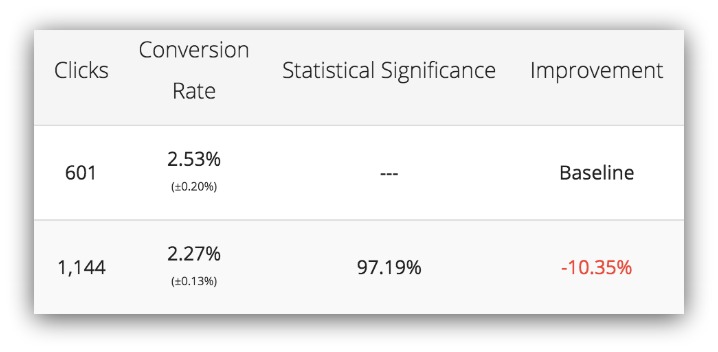

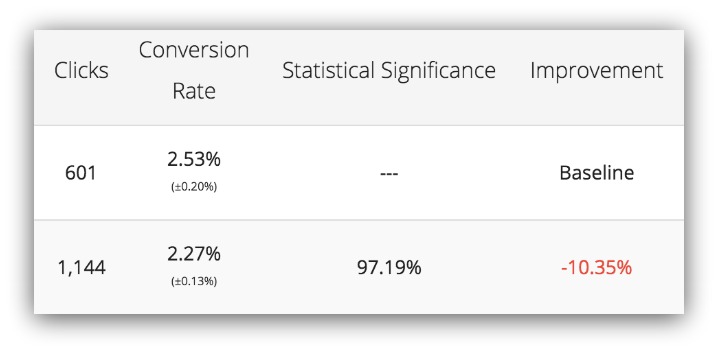

Focus on the percentage in the third column. That’s the statistical significance percentage. It goes all the way to 100%, but you’ll rarely hit that sort of statistical significance.

Your goal should be to get over 95% confidence on every test. Once you’re over 95%, it’s essentially saying there’s only a 5% chance you’d see a gap this big from random luck alone.

You see the varying range of statistical significance spread across our tests.

- The default option has less than a 1% chance of being valid.

- The Popup #1 – No URL option has an 82% chance of being valid.

- The WINNING POPUP (thusly named after the test) has a 99.95% chance of being valid.

At 99.95%, we can determine that WINNING POPUP’s results are the most valid in the test group.
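If you want to sanity-check a confidence number yourself, here is a minimal sketch. It assumes a pooled two-proportion z-test, which is one common way to compute this and not necessarily the formula Sumo or any other tool uses. The function name and the visitor counts are made up for illustration.

```python
from math import erf, sqrt


def confidence_variant_beats_control(control_visitors, control_conversions,
                                     variant_visitors, variant_conversions):
    """One-sided confidence (0 to 1) that the variant's true rate beats the control's.

    Uses a pooled two-proportion z-test. That choice is an assumption here;
    your testing tool may use a different formula.
    """
    control_rate = control_conversions / control_visitors
    variant_rate = variant_conversions / variant_visitors
    pooled = (control_conversions + variant_conversions) / (control_visitors + variant_visitors)
    std_error = sqrt(pooled * (1 - pooled) * (1 / control_visitors + 1 / variant_visitors))
    if std_error == 0:
        return 0.5  # identical all-or-nothing results in both arms: nothing to compare
    z = (variant_rate - control_rate) / std_error
    # Standard normal CDF written in terms of the error function.
    return 0.5 * (1 + erf(z / sqrt(2)))


# Made-up counts: the same 10% vs 15% conversion rates at two sample sizes.
early = confidence_variant_beats_control(100, 10, 100, 15)
later = confidence_variant_beats_control(1000, 100, 1000, 150)
print(f"after 200 visitors:  {early:.1%}")
print(f"after 2000 visitors: {later:.1%}")
```

The same lead in conversion rate sits below the 95% mark on the small sample and clears it on the larger one, which is why an early "winner" deserves suspicion.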

Key Takeaway: Aim for your own tests to get above the 95% mark before you declare the winner.

A/B Test Mistake #2: You Set The Test Up Wrong

Pop quiz:

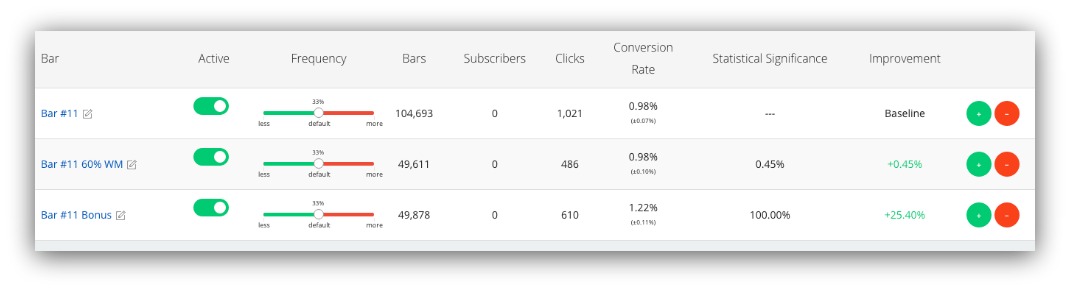

What’s wrong with this test?

The two variants have almost identical traffic. The conversion rates are close.

Yet the variants differ wildly in statistical significance.

The first variant has 0.45% statistical significance, yet the second variant has 100% statistical significance.

Alright, I won’t make you keep guessing. That’s because a variant was introduced later in this test.

If you run a test and introduce a new variant partway through, you can almost guarantee the new variant won’t hit statistical significance.

The Solution:

Start every new A/B test from scratch.

Statistical significance measures the validity of a current test. When you introduce a new variant, it isn’t treated as part of the original test.

If you want to ensure statistical validity every single time, you need to start a new test every time you want to introduce a new variant.

When you start from scratch and put every variant on the same level as the control, you have a higher chance of seeing which variant actually reaches statistical significance.

Key Takeaway: If you want to test something new, start a completely new test. Don’t introduce a variant into an existing test.

A/B Test Mistake #3: You Called It Too Late

I know what you’re thinking. “Damn, Sean, you just said don’t stop them too early. Now you’re saying don’t let them run too long. Are you the kind of dude that takes 20 minutes to pick an ice cream at Coldstone?”

Nope. Takes me one minute because I like chocolate with gummi bears. And I’m also saying you can run an A/B test for far too long.

Why’s that bad? You end up wasting time. In that time, you could be starting a whole new series of tests.

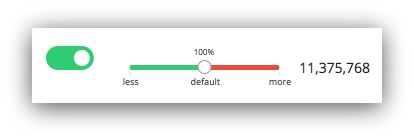

This is an A/B test that ran 11,375,768 times. It took five months to get to that number.

I guarantee you that’s far too long. They could have reached statistical significance long before that. That wasted time means fewer tests run, which straight up leads to missed revenue.

The Solution:

Know what you need to get statistical significance.

There are two ways you can do this:

- Wait around and check the statistical significance each day.

- Have a plan and work backwards from it.

The first option is perfectly fine, except your stats can be thrown off. Some tools claim statistical significance after 100 visits (which is bad). Some sites can get 100,000 visitors in a day.

Neither of these options factors in time. That’s why you need to set a goal and work backwards from it.

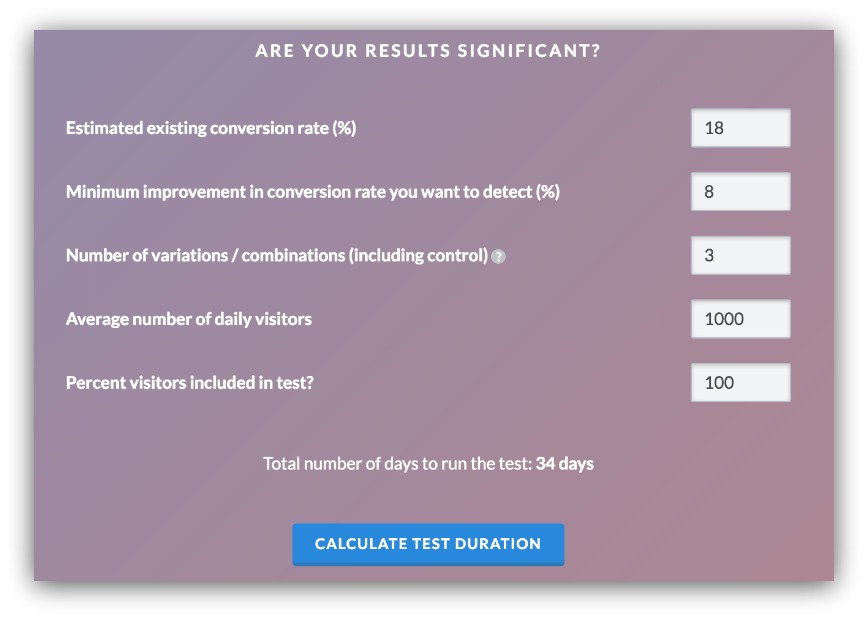

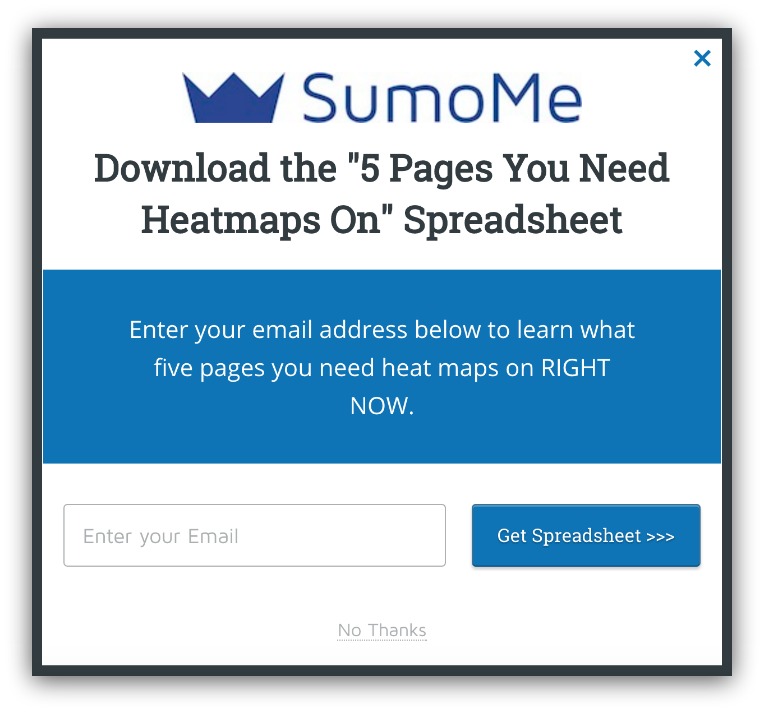

This great tool helps you set a gameplan for how long you need to run your A/B test.

You can see it asks for a couple important things:

- Existing Conversion Rate: What percentage is your control already converting at?

- Minimum Improvement in Conversion Rate: How much improvement do you want to see (hint: don’t set this crazy high — temper your expectations)?

- Variations: Including the control, how many variations are you testing?

- Daily Visitors: On average, how many people visit that page per day?

- Percent Included in Test: What portion of your audience will see this test?

If we use my image as an example, you’ll see I’m shooting for at least an 8% bump in conversion rate with three variations and 1,000 visitors per day.

To see that kind of growth, you need to run this test for 34 days. In roughly a month I’ll have the results I’m looking for.
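If you’re curious what a duration calculator like this does under the hood, here is a rough sketch. It assumes the standard two-proportion sample-size formula, a relative improvement target, 95% confidence and 80% power. Those defaults and the function name are my assumptions, so don’t expect it to match the exact day counts from any particular tool.

```python
from math import ceil
from statistics import NormalDist


def days_to_run(baseline_rate, relative_lift, variations, daily_visitors,
                share_in_test=1.0, confidence=0.95, power=0.80):
    """Estimate how many days a test needs to detect `relative_lift`.

    baseline_rate  -- existing conversion rate, e.g. 0.20 for 20%
    relative_lift  -- minimum improvement to detect, e.g. 0.10 for +10%
    variations     -- number of versions, including the control
    share_in_test  -- fraction of visitors included in the test
    The confidence and power defaults are assumptions, not a tool's settings.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    visitors_per_variation = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    total_visitors = visitors_per_variation * variations
    return ceil(total_visitors / (daily_visitors * share_in_test))


# Hypothetical inputs: 20% baseline, +10% target, control plus one variation.
print(days_to_run(0.20, 0.10, variations=2, daily_visitors=1000))
```

Play with the inputs and you’ll see the pattern: smaller target improvements and more variations push the duration up, while more daily traffic pulls it down.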

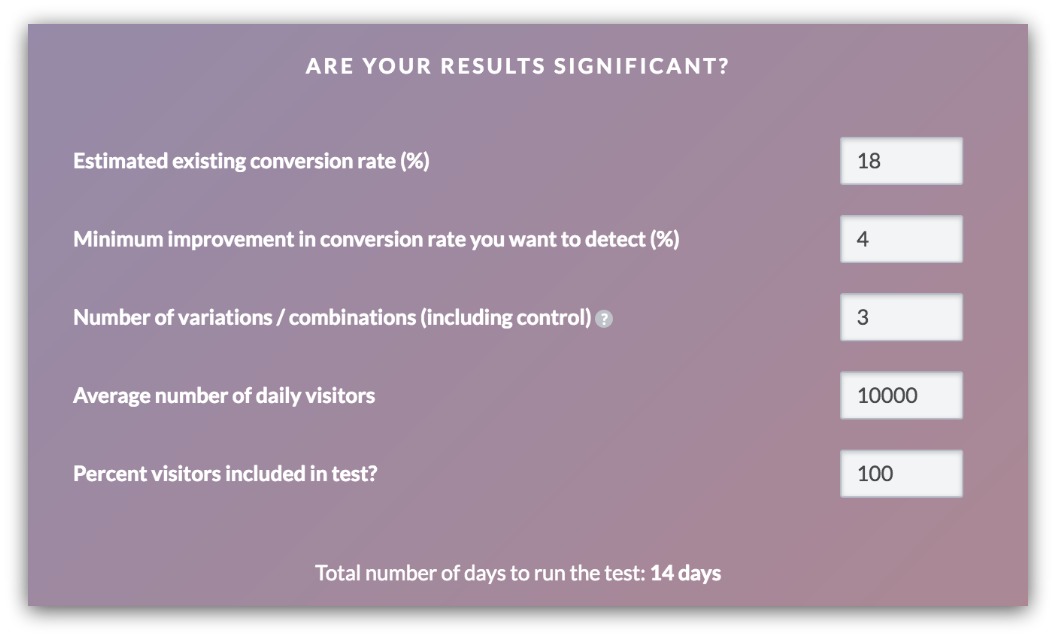

If I wanted a mere 4% conversion rate increase and I had a larger audience, I’d need only 14 days for the test:

Key Takeaway: By having goals and working backward from them, you guarantee you don’t waste time by running a test too long.

A/B Test Mistake #4: You Tested Too Much

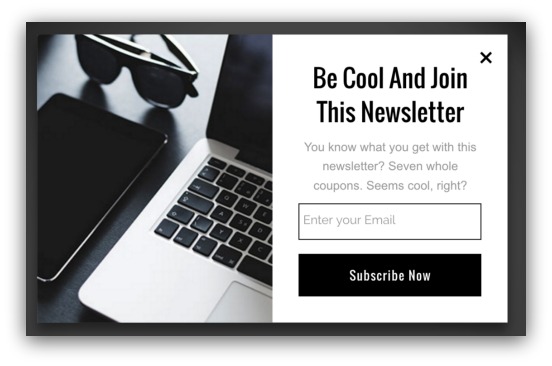

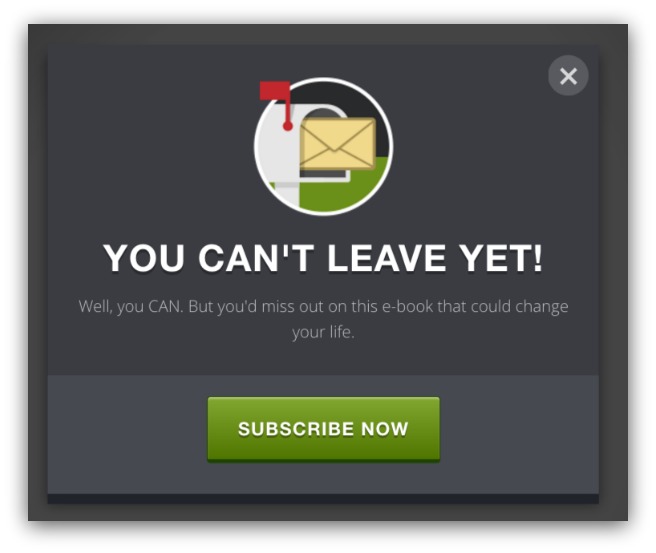

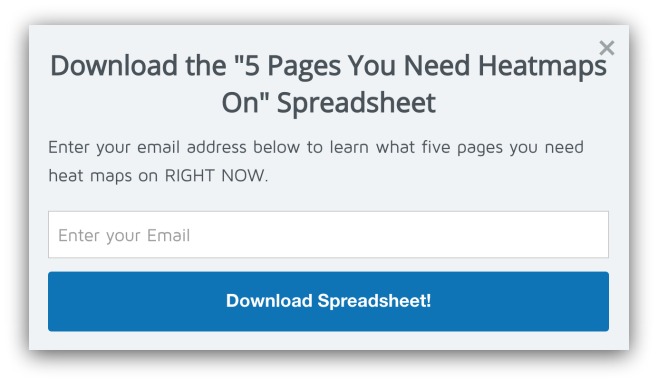

This is the most common mistake made when you first start A/B testing. You’re excited to optimize your conversions and you create two different List Builder popups for your blog homepage. This:

And this:

You run the tests and one of them is the clear winner. AWESOME! You proudly show it to a coworker or friend and they ask the question that shatters your euphoria:

“So what made it work?”

Uh…crud. Check out those two examples again. The differences are staggering. They’re two entirely different formats with different colors, headlines, sub-headlines and form fields.

You changed up too much. So now you don’t know what worked.

The Solution:

Only make one change per A/B test.

Not two. Not three. One.

You can always create multiple tests against a control with one change per test. For example, one test may have a different headline, while another test might change the subhead. It’s important to change only one thing per test to determine what actually works.

Here’s a test I’m running right now on Sumo:

The control

The test

The headline, call to action, and subhead remained the same. The only thing I changed was the layout. If the test ends up winning (with statistical significance) then I can safely say the layout led to increased conversions.

Key Takeaway: Change one thing per test to know what caused the increase in conversions.

A/B Test Mistake #5: You Ran Too Many Tests On A Page

This is the ugly cousin of testing too many things.

A/B testing is kind of like gambling. A few tests may lose, but once you get that big winner you feel like you need to go on an A/B testing spree.

So you run one A/B test on that page. Then another. And another. Pretty soon you’re 15 tests deep on a page and you’re consistently seeing conversion gains of 0.3%.

Congrats. You’ve fallen victim to opportunity cost and local maximum.

- Opportunity Cost: This is the cost of an alternative that must be forgone in order to pursue a certain action. When you spend that much time on a page, you’re giving up time optimizing other pages.

- Local Maximum: In the world of design, this term means hitting the peak of your current design. From that point on, no matter what you do, the gains will be minuscule because you’ve achieved as much as you can on that current design.

The local maximum is the key here. Once you start to see small returns on your page after a few A/B tests, you can assume you’ve hit local maximum.

The Solution:

Adjust your goals beforehand.

Remember that tool we used to determine how long you should run your A/B test? There was a section that asked what your expected conversion rate increase was:

For each test, make sure you stand by that goal. Once those goals start to dip below the trade-off value (your time and money vs. amount of leads or sales you increase) then it’s time to move on.

Key Takeaway: Know when your page has hit local maximum and move on to a new page.

A/B Test Mistake #6: You Tested The Variation Without The Control

I know. This seems crazy. But I swear it happens.

Instead of actually, you know, testing the variants before putting them in place permanently, some folks like to go ahead and delete the original and publish the new variant.

Here’s the thought process behind that: they make the change, then watch their core analytics to see if they get more conversions.

But that’s missing the point. Something can get conversions on its own, but you need to test it simultaneously against the original to truly see which one is best.

The Solution:

Actually run an A/B test.

Shocking, I know. I won’t spend much time on this, but you need to test things out before you push them live. If you don’t, you risk hurting your conversions AND jarring your users.

Look how easy it is to set up an A/B test:

Just a couple clicks and boom, there’s a fully functional A/B test between two List Builder popups.

Key Takeaway: Run an actual A/B test. Please.

A/B Test Mistake #7: You Made A Generalization

Imagine this: you run an A/B test, and your variant sees a 30% increase in conversions over the control!

Time to retire to luxurious South Dakota because you just found the secret to conversions.

So you take the magic from that A/B test and apply it EVERYWHERE on your site. The wording, design, call to action, all of it.

Flash forward a couple weeks later and you’re seeing site-wide drops in your conversion rate. What you thought was the Rosetta Stone of conversions just turned out to work for that one specific test.

You just made a generalization and applied it site-wide. And that’s trouble.

The Solution:

Always run an A/B test before implementing.

This goes hand-in-hand with the last point about running a test against the control (instead of pushing the variant live).

Think of it as a gradual build:

- You have a significant test with big conversion improvements

- You set up a new test on a new page to see if the finding holds true.

- Repeat.

If it’s truly a site-wide game changer, then the tests will continue to prove themselves.

Key Takeaway: Test every time before you make a standard site-wide change.

A/B Test Mistake #8: You Installed Your A/B Test Tool Wrong

There are tons of tools out there for A/B testing. The problem is, it’s hard to find one that can A/B test everything on your site.

So you go through the process of jerry-rigging your tool to test everything. Custom code, new installs, the whole nine yards.

But then things happen. Things like:

- You put the tracking code in the wrong spot or page

- One bit of code threw off a bunch of things on your site

- You didn’t sync up the tracking code with the actual A/B test

- The A/B test tool doesn’t track the test on a given plugin

It starts to add up. But there’s an easier way to bypass all that and make sure your A/B tests work every time.

The Solution:

Find tools that have built-in A/B testing.

Regular A/B test tools like VWO are great for testing web pages. But if you have popups, Welcome Mats or third party plugins it can be tricky to get accurate results.

That’s why you should find tools that include A/B testing as part of their core functionality.

Take Sumo, for example. If you want to set up an A/B test, it takes less than a minute to create and has all the features you’d want:

- Stop/Start Test: Click a button and you can start or stop a test whenever you like.

- Test Frequency: Adjust the slider to change how often a visitor sees a certain variation.

- Action to Conversion: You can see how many visitors saw the test, how many converted and what the conversion rate percentage was.

- Statistical Significance: Here’s how confident you can be about your test being valid.

- Improvement: How much better the test is vs the control.

When your tools have built-in A/B testing, you don’t have to worry about installing an A/B test tool and making it work. It’s one less thing to worry about and it helps ensure you get accurate results site-wide.

Key Takeaway: Find marketing tools that have A/B testing built in.

A/B Test Mistake #9: You Tested Something Insignificant

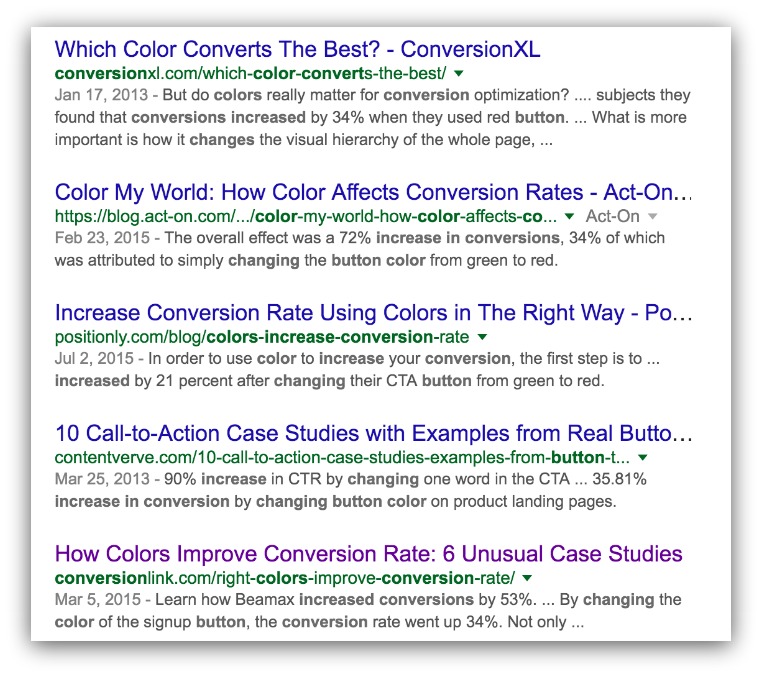

Look what happens when I Google “button color increase in conversions”:

There’s no shortage of content about what color your button should be, or how someone dramatically increased their conversions by changing colors.

If you read this article and rush out to test your button colors, I’ll be forced to flood your inbox with an unrelenting stream of David Hasselhoff pictures. And not the ones from Baywatch, either.

The chance of you finding the silver bullet for button color is slim. If you spend your time testing small things, you miss out on testing the things that consistently bring big increases in conversions.

The Solution:

Test the things that matter.

There are a plethora of things you can A/B test, but there are five easy things you can A/B test right now that are guaranteed to have the greatest effect on your bottom line:

- Headlines: David Ogilvy said you should spend 80 cents of your dollar on the headline. Nailing a headline that conveys your core message and solves a reader’s problem will absolutely increase conversions. (Hint: The KingSumo plugin helps with that)

- Call to Action: Whether it’s a button, anchored text or anything else, test the wording of your call to action. This is ultimately what makes a visitor act, so find what best pushes the “act” button in your visitors’ minds.

- Pricing: It can be scary to test pricing. But this is the page to test if you want to see an immediate increase in revenue.

- Images: Images are incredibly powerful triggers for conversions. They direct eye movement, appeal to the senses and convey non-written messages. Testing the imagery you use can help feed into more visitors acting on your call to action.

- Shorter vs. Longer Product Descriptions: This can be in your sub-headline or anywhere else, but it’s basically the copy that describes the benefits and features of your product. Test this copy to get to the heart of what your visitors want.

You can feel confident about A/B testing the right elements when you focus on these five important parts of your site.

Key Takeaway: Test the things that matter.

A/B Test Mistake #10: You Didn’t Outline Your Goal

Why are you A/B testing?

Seriously. Why? Are you doing it because all the big-time marketers tell you to?

Is it because you heard it’ll help get you rich with cashola and email subscribers?

Those might all be reasons as to why you’re A/B testing. But why are you A/B testing any specific thing?

That’s something a lot of people forget to ask when they go down the A/B test road.

There’s no real goal in place. A lot of times it’s a “test stuff on your favorite page until something works” mentality.

That’s like sailing somewhere without a map. And that’s only ever worked for one person.

This guy.

Trust me. There’s a better way to A/B test.

The Solution:

Set a goal.

It’s a common theme in this guide, but setting goals will undoubtedly help you A/B test more efficiently.

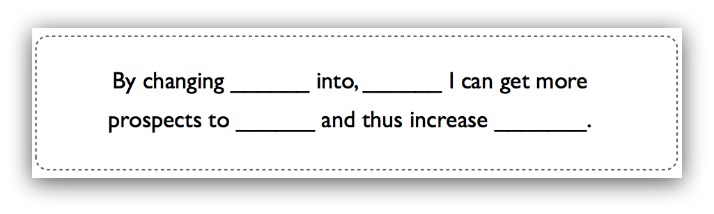

Michael Aagaard from Content Verve has a great way of setting A/B test goals. Here’s his formula:

From Content Verve

This is a fantastic framework to follow. Look how easily it works:

- By changing the call to action on my blog post into an outcome-based call to action I can get more prospects to join my newsletter and thus increase my email list.

Cool, huh? You can do that for any page, and you should.

If you set goals like this from the get-go, you set yourself up for a structured test. It’ll help you better craft your test and stick to the truth you’re trying to find.

Key Takeaway: Set a goal.

A/B Test Mistake #11: You Didn’t Prioritize

If you had a page with 700 daily visitors and a page with 20 daily visitors, which one would you A/B test first?

On the surface, probably the 700 daily visitors page. More traffic = more people to convert. I was a journalism major and even I can do that math.

But you know this is a rhetorical question, so here comes the expected twist:

What if I told you the page with 700 daily visits was a blog post and the page with 20 daily visitors was an enterprise landing page?

That changes it up a bit, doesn’t it?

That’s when the decisions get a bit harder. Like would you rather:

- Test the pricing tier layout on your pricing page or the call to action on your homepage?

- Test a high-traffic blog post without a call to action vs a low-traffic landing page for an ebook?

- Test a headline on your homepage vs an image on your main product page?

Like I said, it can be difficult to determine what you should A/B test first.

The Solution:

Target the easy wins and the high impact pages.

This is a fine line to balance, but it all starts with working backwards from a goal. Are you looking for more revenue? More signups? Having this goal will help you when you select your page to test.

But the best pages are the easy wins and the high impacts.

- Easy Wins: High and medium traffic pages that lack something (call to action, headline, email capture, etc.)

- High Impact: Pages that are most responsible for growing your email list or revenue.

So let’s go back to the example of the blog post with 700 daily visitors vs the enterprise sales page with 20 daily visits.

Assume there’s no call to action on the blog post. So you test something like a List Builder pop-up to give away a content upgrade. If you assume a 5% conversion rate on that pop-up, you’re looking at 35 email subscribers per day.

Now let’s say your autoresponder sequence can convert 1% of people on the list for a product that’s $35. This is pretty standard.

After one week, you can hypothesize that two people buy your product from that page. So that’s $70 a week from the test.

Not bad. Now let’s take a look at the enterprise sales page. Let’s say that converts at 0.1% (with the follow ups and calls and whatnot) for a $3,000 product. So it takes 50 days (or seven weeks) to close a sale.

If you could raise that conversion to even 0.2%, you’re looking at closing a sale every 3.5 weeks.

So in this scenario, you’d want to put your resources into the enterprise sales page. The blog post is an easy win, but the enterprise sales page will have the biggest impact on your revenue.
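Here’s that comparison as a quick sketch, using the numbers from the example. The helper name is made up, and the sketch keeps fractional buyers instead of rounding down to two sales a week, so the blog figure lands a little above $70.

```python
def weekly_revenue(daily_visitors, conversion_rate, price):
    """Expected weekly revenue from a page, given its end-to-end conversion rate."""
    return daily_visitors * 7 * conversion_rate * price


# Blog post: 700 visitors/day, 5% join the list, 1% of the list buys a $35 product.
blog = weekly_revenue(700, 0.05 * 0.01, 35)

# Enterprise page: 20 visitors/day and a $3,000 product, before and after the lift.
enterprise_now = weekly_revenue(20, 0.001, 3000)
enterprise_improved = weekly_revenue(20, 0.002, 3000)

print(f"blog post:            ${blog:,.2f} per week")
print(f"enterprise (0.1%):    ${enterprise_now:,.2f} per week")
print(f"enterprise (0.2%):    ${enterprise_improved:,.2f} per week")
```

Swap in your own traffic, conversion rates and prices to run the same due diligence on your pages.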

Some pages might not be an easy win or have high impact like this example. It’s up to you to go through your pages and do this due diligence to see what is worth your time to test.

Key Takeaway: Math (and also look for the easy wins and high impact opportunities).

A/B Test Mistake #12: You Ignored The Data

Tell me, wise reader. What would you do in this situation?

The control is on the top, while the test is on the bottom. At 97% statistical significance, it’s safe to say the 10% decrease in conversions from the variant is valid.

So which option would you use going forward?

No, this isn’t a trick question. Of course you’d use the control!

So why is it I see people use the losing variant time and time again?

I’ll tell you why. And I’ll give you five real quotes I’ve heard to defend this ridiculousness (names redacted, of course):

- “I thought it looked prettier.”

- “I think this wording aligns better with my brand anyways.”

- “It wasn’t that big of an improvement anyways.”

- “I don’t know, I just didn’t like the way it looked on my page.”

- “Because I know better.”

All these come down to biases and gut calls. The last quote actually comes from a boss (nope, not this guy). I bet you’ve been in a scenario like that where you have to nod your head and do the wrong thing.

So why run the A/B test in the first place if you don’t choose the clear winner?

The Solution:

Trust the data.

If you’ve set up your A/B test the correct way then you can trust the validity of your findings.

Remember, it may look like just numbers. But there are people behind those numbers.

Real people who watch Netflix and eat tacos just like you do. So you shouldn’t discount the decisions they make when the test results come rolling in.

Even if it goes against your design taste or gut feeling (or your boss’ otherworldly knowledge).

Key Takeaway: Let the numbers tell you what’s best.

A/B Test Mistake #13: You Didn’t Settle For The Small Win

Let’s go back to two things from the last A/B test mistake. This:

And this:

- “It wasn’t that big of an improvement anyways.”

That’s a combo-platter problem.

People romanticize A/B testing. They think every test will reveal a 30% increase in conversions.

That’s not the case. You might hit on that one weird trick (sorry) that gets the conversion rate optimization world all hot and bothered.

But those results are few and far between. People don’t write articles about 2% increases. It’s not sexy and that article won’t get clicks.

But a 2% increase? You better believe it’ll make an impact down the road.

The Solution:

Enjoy the small wins.

That’s what A/B testing essentially is. You’re optimizing your pages little by little to see better gains.

The small gains are tough to get excited about. But think of it like weight loss:

If this guy got bummed out after losing three pounds after a week of exercise he’d never look like this two years later:

Small gains over time can have a big effect. In the example above I showed you two results: a 2.53% and a 2.27% conversion rate.

We’re talking barely ¼ of a percentage point in conversion rate. But let’s do some math:

- Assume your site receives 100,000 visitors in a year.

- Converting at 2.53% you get 2,530 signups.

- Converting at 2.27% you get 2,270 signups.

If you weren’t happy with the small gains, you’d be missing out on 260 subscribers.

Even a ¼ percentage point increase can pay off.

Key Takeaway: Take the small wins.

A/B Test Mistake #14: You Tested Too Much In The Funnel

So you’ve got your easy wins and high impact pages picked out:

- A landing page

- A product page

- A pricing page.

You’re running two variations against the control for each page, and everything is set up correctly.

Too bad they’re all in the same funnel.

Why’s that a bad thing? Try determining what drove sales and what didn’t.

Imagine that mess. You’ve got three versions of a landing page driving to three versions of a product page leading to three versions of a pricing page.

Was it something on a variant of the landing page that resulted in a sale? Or was it the test on the pricing page that closed the deal?

That’s 27 different paths for a sale.

You may have sound tests on the individual pages, but the path to conversion becomes too muddled to trust a result at the beginning of the stream.
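To see how quickly the paths pile up, here’s a small sketch that enumerates them. The page labels are placeholders I made up for illustration.

```python
from itertools import product

# Three versions of each page: the control plus two variations.
landing_pages = ["landing-control", "landing-A", "landing-B"]
product_pages = ["product-control", "product-A", "product-B"]
pricing_pages = ["pricing-control", "pricing-A", "pricing-B"]

# Testing all three pages at once: every combination is a distinct path to a sale.
all_paths = list(product(landing_pages, product_pages, pricing_pages))
print(len(all_paths))  # 27

# Testing only the last page in the funnel: the earlier pages stay fixed.
pricing_only_paths = list(product(["landing-control"], ["product-control"], pricing_pages))
print(len(pricing_only_paths))  # 3
```

With only one page under test, there are just three paths to compare, and any difference in sales can be pinned on that page.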

The Solution:

Know your funnels and work back-to-front.

Let’s break that down quick:

- Know Your Funnels: Map out your paths to conversion and know every page that falls into that path. If you have a landing page driving to a product page leading into the pricing page, map that out and record it.

- Work Back-to-Front: If you’re going to A/B test anything within a funnel, you need to start from the end and work forward. It’s like a reverse ripple effect: the back of the funnel doesn’t affect the front of the funnel as much as the other way around.

These are the stats that don’t show up in most A/B test tools. You’ll generally get a base conversion rate for a given variation. That won’t account for the different paths your visitor takes to conversion.

By running your tests this way, you don’t have to worry about those variables. It creates a more controlled testing environment.

Key Takeaway: Control your funnel paths and work back-to-front.

A/B Test Mistake #15: You Didn’t Give The Variation A Chance

Let me sum up my college dating career with a phrase I heard a few times:

- “Aw, poor guy. He never had a chance. He should stop always wearing sweatpants.”

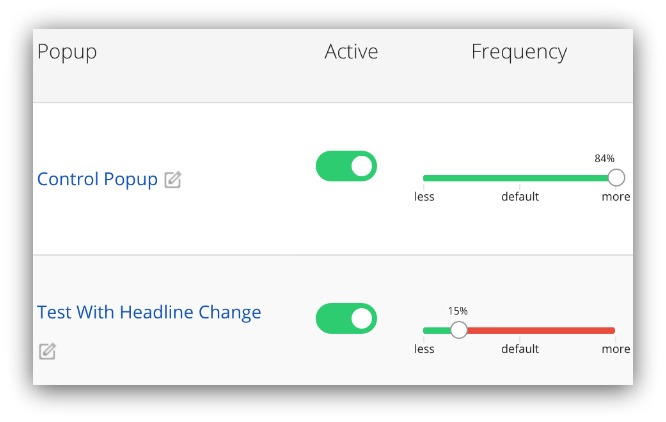

Don’t pay attention to that last part. The first part is what matters. Imagine you ran a whole test like this:

That’s 84% to the control, 15% to the variant and 1% to the A/B Test Gods™.

Do you realize how long it would take to get statistical significance on that test?

A long time. As in over double the normal amount of time for an A/B test with a 50/50 traffic split.

That’s way too long for a test. You’ll either:

- Run the test too short and not get a confident result.

- Run the test forever and waste time/resources.

Neither is desirable. So what do you do?

The Solution:

Make the split more even.

Take it from Sumo designer Mark Peck. On top of running design A/B tests on this very site, he used to throw down serious A/B testitude on Booking.com. Here’s what he has to say:

- “Depending on your traffic, uneven splits will lead to your test taking a very, very long time. The only time you should do an uneven split is if you’re worried something might break. You could start your test at 95/5 to make sure conversions are coming through and THEN ramp it back to 50/50.”

With most A/B testing tools, you can usually allocate traffic easily. With Sumo it’s a simple slider:

#smooth

That simple allocation can save you tons of time on your A/B tests.
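How much time, exactly? Here’s a back-of-the-napkin sketch. It assumes both versions convert at a similar rate, so the noise in the measured difference scales with 1/(control visitors) + 1/(variant visitors). That simplification and the function name are mine, so treat the output as a ballpark.

```python
def duration_multiplier(control_share, variant_share):
    """How many times longer an uneven split runs than 50/50 for the same precision.

    Assumes similar conversion rates in both arms, so the variance of the
    difference is proportional to 1/n_control + 1/n_variant.
    """
    uneven = 1 / control_share + 1 / variant_share
    even = 1 / 0.5 + 1 / 0.5
    return uneven / even


for control_share, variant_share in [(0.50, 0.50), (0.84, 0.15), (0.95, 0.05)]:
    multiplier = duration_multiplier(control_share, variant_share)
    print(f"{control_share:.0%}/{variant_share:.0%} split: {multiplier:.2f}x as long")
```

Under that simplification, the 84/15 split from the example takes roughly twice as long as an even one, and a 95/5 split takes more than five times as long. That’s why the 95/5 setup only makes sense as a short safety check.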

Key Takeaway: Even up your traffic split.

A/B Test Mistake #16: You Mis-Read The Results

You might not believe this, but your eyes can deceive you in an A/B test. No matter how much you look with your special eyes.

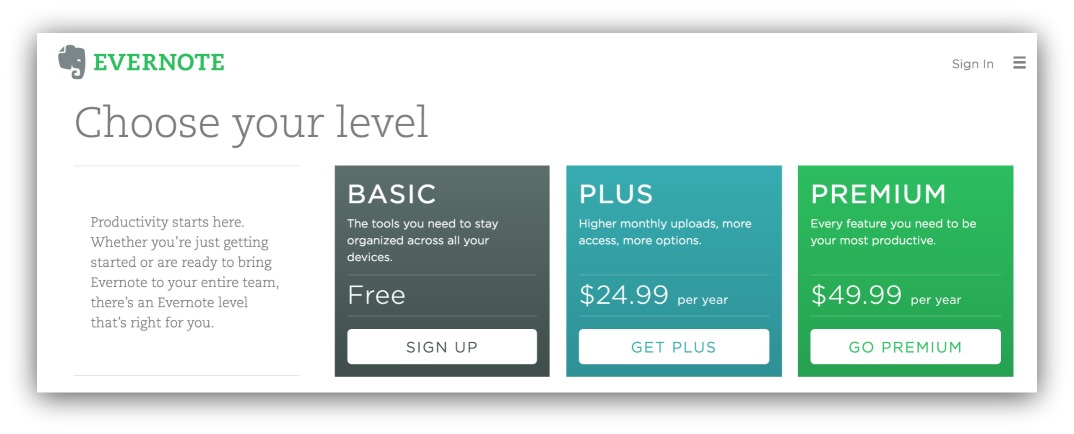

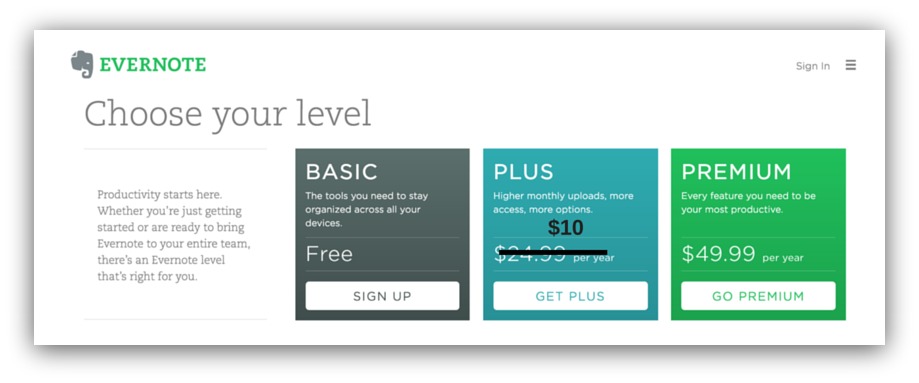

I’ll explain. For the sake of simplicity, let’s focus on pricing pages.

Here’s Evernote’s pricing page. Let’s say they wanted to set up an A/B test where they lowered the cost of Plus to $10:

You can barely tell I photoshopped this

And good news. Their test rocked it! They increased their conversion rate by 4%.

That would be a horrible outcome if they stuck with it.

Why? Because they’d be losing money. If they’re looking at just conversions, they’d be missing out on how much revenue the page actually generates. More conversions don’t automatically equal more money.

The Solution:

Look at supporting statistics.

If you rely on conversions alone for things that deal with revenue, you’re going to have a tough time.

You need to see what the total revenue is from that test. You may increase conversions significantly, but you may also decrease your revenue (I know, it still sounds weird).

Take the Evernote example. Say the pricing page sees 500 people per day. It converts at 23% with a split of Free/17%, Plus/4% and Premium/2%. At the prices these figures imply ($24.99 for Plus and $49.99 for Premium), that’s $999.70 in revenue per day.

Then there’s the test where the Plus price is lowered to $10. We’ve got a 4% increase in conversions. Let’s say Free picked up a percent and Plus picked up 3%.

Unfortunately, 1% of Premium users thought the value at Plus was too good, so they switched over to Plus.

Now you’ve got a split of Free/18%, Plus/8% and Premium/1%. That’s a total of $649.95.

So conversions went up, but Evernote would lose about $350 per day.

When you’re reviewing your A/B test results, don’t forget to look at the bottom line. Conversions may increase, but you may lose money in the process.
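Here’s a minimal sketch of that bottom-line check, using the plan splits from the Evernote example. The $24.99 Plus and $49.99 Premium prices are assumptions on my part (they aren’t stated in the text), and the exact dollar totals depend on them.

```python
def daily_revenue(daily_visitors, plans):
    """Total daily revenue; `plans` maps plan name -> (share of visitors, price)."""
    return sum(daily_visitors * share * price for share, price in plans.values())


def conversion_rate(plans):
    """Share of visitors who pick any plan."""
    return sum(share for share, _ in plans.values())


PLUS_PRICE = 24.99     # assumed price, not stated in the text
PREMIUM_PRICE = 49.99  # assumed price, not stated in the text

control = {"Free": (0.17, 0.0), "Plus": (0.04, PLUS_PRICE), "Premium": (0.02, PREMIUM_PRICE)}
variant = {"Free": (0.18, 0.0), "Plus": (0.08, 10.00), "Premium": (0.01, PREMIUM_PRICE)}

for name, plans in (("control", control), ("variant", variant)):
    revenue = daily_revenue(500, plans)
    print(f"{name}: {conversion_rate(plans):.0%} convert, ${revenue:,.2f} per day")
```

The variant wins on conversion rate and loses on revenue, which is exactly the trap a conversions-only report hides.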

Key Takeaway: More math (but also pay attention to other stats besides conversion rate).

A/B Test Mistake #17: Your Test Copy Wasn’t Good Enough

There are 171,476 words in the English dictionary, and you’re supposed to string the right ones together to make people take action.

That’s not daunting, intimidating or dismaying at all.

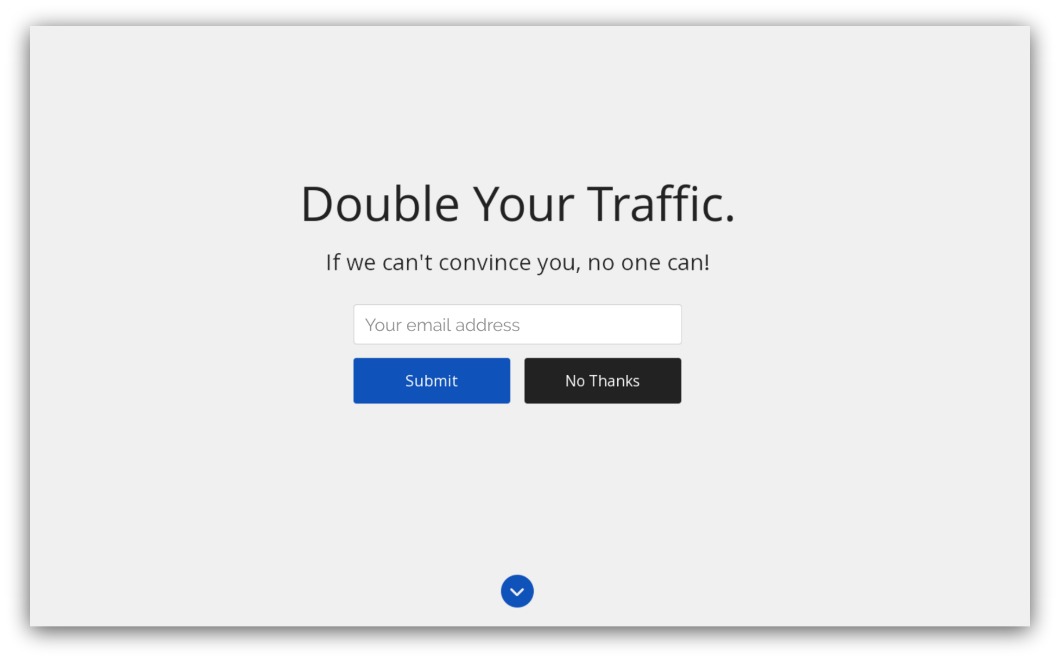

So you sit down at your computer thinking of a new way to increase conversions on your Welcome Mat. You’ve narrowed it down to your call to action, and here’s what you test:

Control

Variant

For the call to action, you decided to test “Submit” vs “Enter.”

Yawn.

Those words are almost interchangeable. What would you prove even if you have a conversion increase? That Enter can move the needle more than Submit?

You can do better than that.

The Solution:

Use completely different language.

If you’re testing copy, you need to try differing or even conflicting messaging in order to test real theories. Let’s try that Welcome Mat call to action again with different copy:

Control

Variant

Ah, much better. Now we’re testing two schools of thought.

The control tests the straightforward approach with a call to action that mirrors the act of completing an action.

The variant tests an outcome-based phrase where the visitor is not just signing up, but signing up for more traffic.

Even if the test doesn’t beat the control, we’d learn that the audience would rather have CTAs be straightforward. That would help tremendously in future A/B tests.

Key Takeaway: Use copy that actually competes.

A/B Test Mistake #18: You Chose A Bad Testing Period

NOW!

I need to A/B test NOW!

You might be thinking that as we come to the close of this guide.

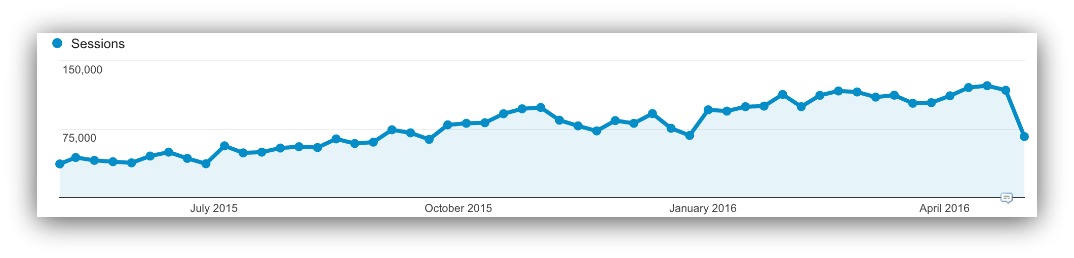

Slow down, partner. Let’s say you’re this company and you decided to test riiiiiiiight here:

Sweet, there’s lots of traffic during that time. You’ll get your test done in half the time!

Wrong.

You don’t want to test during spikes. Spikes in your site indicate irregular behavior. That means visitors are reacting to something that’s not normal.

When you run A/B tests, you want them to be as controlled as possible. You want your traffic steady without any bias of deals (massive discounts on your site) or events (Christmas, Black Friday, etc.).

The Solution:

Look to history.

A quick glance at your Google Analytics can tell you when your normal spikes are. For that graph above, it looks like Christmas and Valentine’s Day are the spikes.

If you see a spike in your historical traffic, investigate it. If it’s something like a piece of content that happened to go viral, that’s fine. You can’t control how wildly popular your writing is.

But if it’s because of a deal you always run during those times, try not to run normal A/B tests during that time. You’ll get thrown off with the irregular traffic.
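If you’d rather not eyeball the graph, here’s one rough way to flag spike days from an export of daily visits. The 1.5x-the-median threshold and the visitor counts are made up for illustration, so tune the threshold to your own traffic.

```python
from statistics import median


def spike_days(daily_visits, threshold=1.5):
    """Return the indexes of days whose traffic exceeds `threshold` times the median."""
    typical = median(daily_visits)
    return [day for day, visits in enumerate(daily_visits) if visits > threshold * typical]


# Made-up daily visitor counts with a two-day promotional spike.
visits = [980, 1010, 1005, 990, 2400, 2250, 1000, 995]
print(spike_days(visits))  # [4, 5]
```

Any day it flags is worth investigating before you schedule a test around it.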

If we look one year back at Sumo, we see this:

Growth!

Outside of a few dips, our traffic shows a steady increase. There aren’t any massive spikes to worry about, so we can deploy an A/B test almost any time.

On top of looking to the past, you need to look to the future.

If you’ve got a marketing calendar, you’re likely aware of any big deals or promotions you’re about to run. You need to anticipate any traffic spikes from those before you run an A/B test.

Looking to the past and the future will help your A/B tests be as controlled and reliable as possible.

Key Takeaway: Run A/B tests during normal traffic times.

A/B Test Mistake #19: You Didn’t Enjoy The Victory

I admit, this is my cliche “end of guide feel-good statement.”

But seriously. No matter the A/B test victory, from 0.5% to 50%, celebrate it.

A/B testing is hard. If you’ve made it this far in the guide then you know how hard it is to A/B test correctly.

A lot of people make the mistake of running A/B tests like cold-hearted machines. They see a victory and go, “Of course. Move on to the next one.”

Don’t do that.

Share your victory with the team! And if you don’t have a team, share it with your mom! She’ll be stoked for you, even if you increased conversions by 0.01%.

Feeling the joy of an A/B test victory helps you keep going through the grind of more A/B tests. If you can keep being excited for each and every A/B test, you’ll be able to withstand the losses in the quest for the victory.

19 A/B Testing Mistakes: An Infographic

Want a simplified version of everything in this guide? Check out the infographic below for the 19 A/B testing mistakes (and how to fix them).
